Until programmers noticed that it could design and build functioning websites and web applications – and might therefore put them out of a job – the initial response to GPT-3 was noticeably quiet. Developed by OpenAI, a San Francisco artificial intelligence lab with influential backers, GPT-3 is the successor to GPT-2, a powerful and responsive text generator whose powers of imitation were so great that it was initially deemed too dangerous to release. However, while GPT-2 was a major technical breakthrough (it demonstrated that statistical language models could learn general-purpose tasks), GPT-3’s only major innovation is scale. It increases the number of trained parameters (the smallest GPT-3 model has 125m, the largest 175b) and expands the training data to encompass most of the publicly accessible internet (45TB before filtering, 570GB afterwards) and a large number of published books. Had OpenAI paid commercial rates, the computational power required to train GPT-3 (~3.14 × 10^23 floating point operations) is estimated to have cost between $4.6m and $12m. The result is a text generator that hackers and hobbyists have demonstrated (with a few examples and some cherry-picking, but without further fine-tuning) can correctly make medical diagnoses and cogently explain the underlying biological reasons; draft legal text from plain English descriptions (and even cite relevant statutes); generate poetry, prose, philosophical musings and art criticism (convincingly imitating the style of many great writers); and translate foreign languages into English with state-of-the-art accuracy (it does less well translating from English). Quantity has a quality all its own.
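The ~3.14 × 10^23 figure can be sanity-checked with a widely used rule of thumb: training a dense transformer costs roughly 6 floating point operations per parameter per training token (about 2 for the forward pass and 4 for the backward pass). The sketch below assumes the 300 billion training tokens reported in the GPT-3 paper. It is a back-of-envelope approximation, not OpenAI’s own accounting, and `training_flops` is an illustrative name.

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute for a dense transformer.

    Uses the rule of thumb C ~= 6 * N * D: roughly 2 FLOPs per parameter per
    token for the forward pass and 4 for the backward pass.
    """
    return 6 * n_params * n_tokens


GPT3_PARAMS = 175e9  # largest GPT-3 model
GPT3_TOKENS = 300e9  # training tokens (assumed, as reported in the GPT-3 paper)

flops = training_flops(GPT3_PARAMS, GPT3_TOKENS)
print(f"{flops:.2e} FLOPs")  # prints "3.15e+23 FLOPs"
```

The estimate lands within about 0.3% of the published figure, which is as close as a rule of thumb can be expected to get.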

GPT-3 is a statistical language model: it has been trained to learn a probability distribution over tokens (roughly: words, fragments of words and punctuation) in text from the internet. Training consists of repeatedly asking the model to predict the next token in a sequence; after each prediction, the model’s parameters are adjusted according to how wrong it was, so that (all being well) its future predictions on similar data become more accurate. Having learned this distribution, GPT-3 is able – given a context (which might include a few examples of the desired form of response) and a prompt – to predict convincingly which paragraphs should come next. So powerful is the model that the prompt can be phrased in natural language as a question, the response reads like an answer, and a short dialogue can be sustained. GPT-3 is more than the ultimate autocomplete.
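To make that training loop concrete, here is a toy next-token predictor in plain Python. It is a heavily simplified sketch, not how GPT-3 is implemented: it conditions on only the single previous word (a bigram model) rather than on a long context processed by a transformer, and it splits text on whitespace instead of using GPT-3’s subword tokenizer. What it shares with GPT-3 is the objective: predict a probability distribution over the next token, compare it with the token that actually came next, and nudge the parameters so that token becomes more likely. The names `train_bigram` and `predict_next` are hypothetical.

```python
import math
import random


def softmax(logits):
    # Subtract the max for numerical stability before exponentiating.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_bigram(tokens, epochs=200, lr=0.5, seed=0):
    """Toy next-token predictor: one row of logits per previous token.

    For each (previous, next) pair it predicts a distribution over the next
    token and takes a gradient step on the cross-entropy loss, making the
    token that actually followed more probable.
    """
    vocab = sorted(set(tokens))
    index = {tok: i for i, tok in enumerate(vocab)}
    rng = random.Random(seed)
    # Randomly initialised parameters: a V x V table of logits.
    W = [[rng.gauss(0, 0.01) for _ in vocab] for _ in vocab]
    pairs = [(index[a], index[b]) for a, b in zip(tokens, tokens[1:])]
    for _ in range(epochs):
        for prev, nxt in pairs:
            probs = softmax(W[prev])
            # Gradient of cross-entropy w.r.t. the logits is (probs - one_hot).
            for j in range(len(vocab)):
                grad = probs[j] - (1.0 if j == nxt else 0.0)
                W[prev][j] -= lr * grad
    return vocab, index, W


def predict_next(word, vocab, index, W):
    # Return the most probable next token and its probability.
    probs = softmax(W[index[word]])
    best = max(range(len(vocab)), key=lambda j: probs[j])
    return vocab[best], probs[best]


corpus = "the court held that the contract was void".split()
vocab, index, W = train_bigram(corpus)
print(predict_next("contract", vocab, index, W))  # most likely next token: 'was'
```

Because “the” is followed by both “court” and “contract” in this tiny corpus, the model learns to split its probability roughly evenly between them: it has learned a distribution, not a single answer.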

GPT-3’s most impressive ability is that it is a few-shot learner. In the past, language models – including GPT-3’s predecessor, GPT-2 – required fine-tuning (i.e. further training) on domain-specific data before they could perform specialized tasks. Building these datasets was expensive, and the further training degraded performance on other tasks. GPT-3 does not require fine-tuning: given a small number of examples or demonstrations, it can perform specialized tasks ‘out of the box’, rapidly adapting to different domains.
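A few-shot “demonstration” is nothing more than text placed in the prompt. The hypothetical helper below assembles one for a clause-classification task. The `Input:`/`Output:` layout and the example clauses are assumptions for illustration; the resulting string would be sent to the model as-is, and the model’s weights are not changed.

```python
from typing import Iterable, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: Iterable[Tuple[str, str]],
    query: str,
) -> str:
    """Assemble a few-shot prompt: instruction, worked examples, then the query.

    The 'learning' happens only because the demonstrations are part of the
    text the model is asked to continue.
    """
    parts = [instruction.strip(), ""]
    for source, target in examples:
        parts.append(f"Input: {source}")
        parts.append(f"Output: {target}")
        parts.append("")
    parts.append(f"Input: {query}")
    parts.append("Output:")  # the model is expected to continue from here
    return "\n".join(parts)


prompt = build_few_shot_prompt(
    "Classify each contract clause by type.",
    [
        ("The Supplier shall indemnify the Customer against all losses.", "Indemnity"),
        ("This Agreement shall be governed by the laws of England and Wales.", "Governing law"),
    ],
    "Either party may terminate this Agreement on 30 days' written notice.",
)
print(prompt)
```

Ending the prompt on a bare `Output:` line is the design choice that matters: it turns the task into a text prediction problem, which is the only kind of problem the model solves.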

Potential Legal Technology Use Cases

Given their power and impressive accuracy, it is inevitable that language models such as GPT-3 will transform the work that lawyers do. GPT-3’s text generation capabilities might be used to generate legal briefs, produce first drafts of contracts from existing precedents, or draft letters to clients, saving lawyers time. Its translation capabilities and syntactic accuracy could be used to turn legal and financial rules into code, aiding regulatory compliance. GPT-3’s capacity to respond cogently and usefully to many natural language questions suggests that it could assist in legal research, e.g. by identifying whether a contract contains certain provisions or by finding precedents articulated in different ways. Barristers might use GPT-3 to extract successful pleas from sentencing hearings; banks and corporate clients might use it to compare clauses in proposed contracts with their own standard forms.

GPT-3 is designed to be a few-shot learner and so does not require fine-tuning. However, law firms with the resources to train the model further on documents from their own internal document management systems or playbooks might find that it performs even better at suggesting clauses or edits to their contracts.

Limitations of the Potential Application of GPT-3 to the Legal Industry

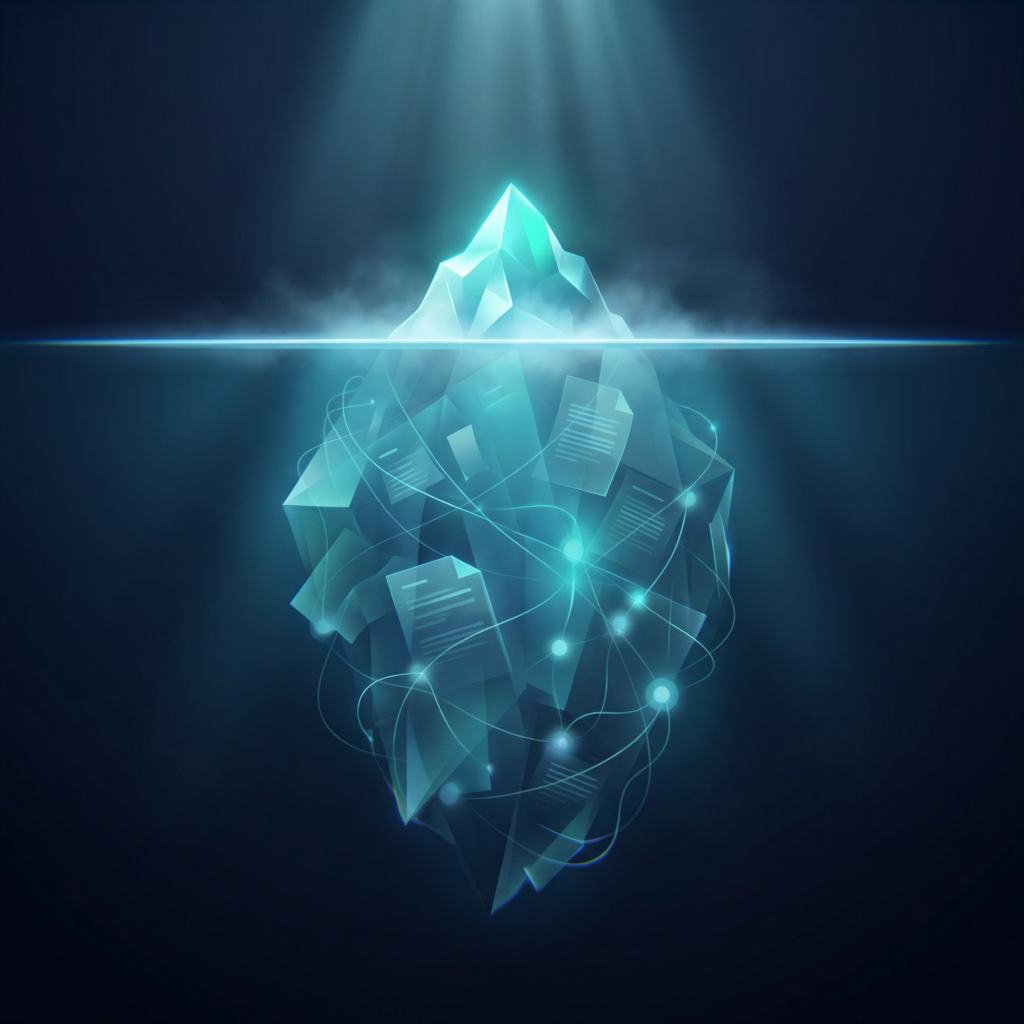

Though GPT-3’s powers of memorization and prediction are impressive, it cannot engage in logical reasoning. GPT-3’s responses might sound plausible and are often correct. However, they are not the result of considered reflection or deliberation: the model’s only operation is to return sequences of words and punctuation in response to a prompt, each sequence weighted by a probability learned during a training regime that taught it to imitate text that was on the internet between 2016 and 2019. (Mercifully, GPT-3 won’t talk about coronavirus.) GPT-3 appears to grasp basic arithmetic: it can add or subtract two- or three-digit numbers, but it fails on four-digit numbers, where it is wrong ~75% of the time. The most plausible explanation is that when asked to perform an addition or a subtraction, GPT-3 is not engaging in arithmetic reasoning but regurgitating patterns it has memorized from its training data; as calculations with more digits are encountered less frequently, the model does worse. (OpenAI argue that in the training set, text of the form “<NUM1> + <NUM2> =” and “<NUM1> plus <NUM2>” is rare for three-digit numbers, on which GPT-3 does well. However, the internet contains tabular data in which one column is the sum of two others, and GPT-3 may have memorized these.)

GPT-3 does badly on tasks that cannot be coerced into text prediction problems: for example, determining whether a passage logically entails a statement, or answering reading comprehension questions that emphasise understanding over summarisation. Unless the answer is available in its training data, it does not handle logical questions well: its responses to questions such as ‘Why are married bachelors possible?’ are well-formed but incorrect, and often nonsensical. (GPT-3 does not question a flawed premise, but where answering an invalid question requires prediction rather than logical reasoning, its educated guesses are not far off: asked ‘Who was president of the United States in 1600?’, it responds ‘Elizabeth I’.) Nor do its passages remain logically consistent (or coherent) over long spans of text. Moreover, because GPT-3’s training did not require interaction with the outside world, its answers to common-sense physics questions (e.g. ‘If I put cheese in the fridge, will it melt?’) are often incorrect. However, for phenomena that are more likely to be discussed in physics textbooks or on Wikipedia (e.g. ‘What would happen if I lit a fire underwater?’ or ‘…in space?’) it performs better.
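The memorization explanation for GPT-3’s arithmetic results can be illustrated with a deliberately crude simulation. The “model” below does no arithmetic at all: it can only recall sums that appeared in its toy “training data”, which contains the same number of problems for every operand length, so any individual four-digit problem is far rarer than any two-digit one. The corpus size, the function names and the resulting percentages are arbitrary assumptions; they are not meant to reproduce GPT-3’s measured accuracies, only the direction of the effect.

```python
import random


def random_operand(digits: int, rng: random.Random) -> int:
    # Uniform over numbers with exactly `digits` digits, e.g. 10..99 for 2.
    return rng.randint(10 ** (digits - 1), 10 ** digits - 1)


def build_memorizer(examples_per_length: int, lengths, seed: int = 0):
    """Return a 'model' that only knows sums it saw during 'training'.

    The toy corpus has the same number of addition problems for every
    operand length, so longer problems are covered far more sparsely.
    """
    rng = random.Random(seed)
    memory = {}
    for d in lengths:
        for _ in range(examples_per_length):
            a, b = random_operand(d, rng), random_operand(d, rng)
            memory[(a, b)] = a + b

    def model(a: int, b: int):
        # No arithmetic: recall the answer if seen, otherwise return None.
        return memory.get((a, b))

    return model


def accuracy(model, digits: int, trials: int = 2000, seed: int = 1) -> float:
    rng = random.Random(seed)
    correct = 0
    for _ in range(trials):
        a, b = random_operand(digits, rng), random_operand(digits, rng)
        correct += model(a, b) == a + b
    return correct / trials


model = build_memorizer(examples_per_length=20_000, lengths=(2, 3, 4))
for d in (2, 3, 4):
    print(f"{d}-digit addition: {accuracy(model, d):.1%} correct")
```

Accuracy collapses as the number of possible problems outgrows the number of memorized examples, even though the “model” was shown just as many problems of each length.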

Compounding GPT-3’s logical and arithmetical failures is its inability to learn from past interactions, except in the short term, where these become part of its prompt. GPT-3 has an unnatural separation of training from inference; in the absence of fine-tuning, this prevents it from following long sequences of events (the prompt size is limited) or developing domain expertise. In addition, GPT-3 is unable to ask questions to fill the gaps in its knowledge or to examine its own beliefs – important human traits that enable us to better understand what we do. Moreover, it is unclear whether GPT-3 is actually learning from the ‘few-shot’ demonstrations provided to it (as part of its prompt), or whether these simply help it to filter memorized examples from its training data.
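A sketch of why a limited prompt size caps what GPT-3 can “remember”: an application that carries a conversation forward has to re-send the history with every request and drop the oldest parts once a token budget is exceeded. GPT-3’s context window is 2,048 tokens, shared between the prompt and the completion. The sketch assumes a whitespace word count as a stand-in for the real tokenizer, and `fit_history` is a hypothetical helper, not part of any OpenAI library.

```python
from typing import List


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer (an assumption): GPT-3 uses
    # byte-pair encoding, which usually yields more tokens than this.
    return len(text.split())


def fit_history(turns: List[str], new_message: str, budget: int) -> List[str]:
    """Keep only the most recent turns that fit in the context window.

    The new message is always kept. Older turns that do not fit are simply
    dropped: the model's weights never change during the conversation, so
    anything outside the window is forgotten.
    """
    kept = [new_message]
    used = count_tokens(new_message)
    for turn in reversed(turns):
        cost = count_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    return list(reversed(kept))


history = [
    "Client: we want a 5-year term.",
    "Counterparty: we offer 3 years.",
    "Client: we can accept 4 years.",
]
window = fit_history(history, "What term has been agreed so far?", budget=15)
print(window)
```

With this (artificially small) budget, the client’s opening position and the counterparty’s offer silently drop out of the window, which is the kind of loss that makes tracking a long negotiation difficult.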

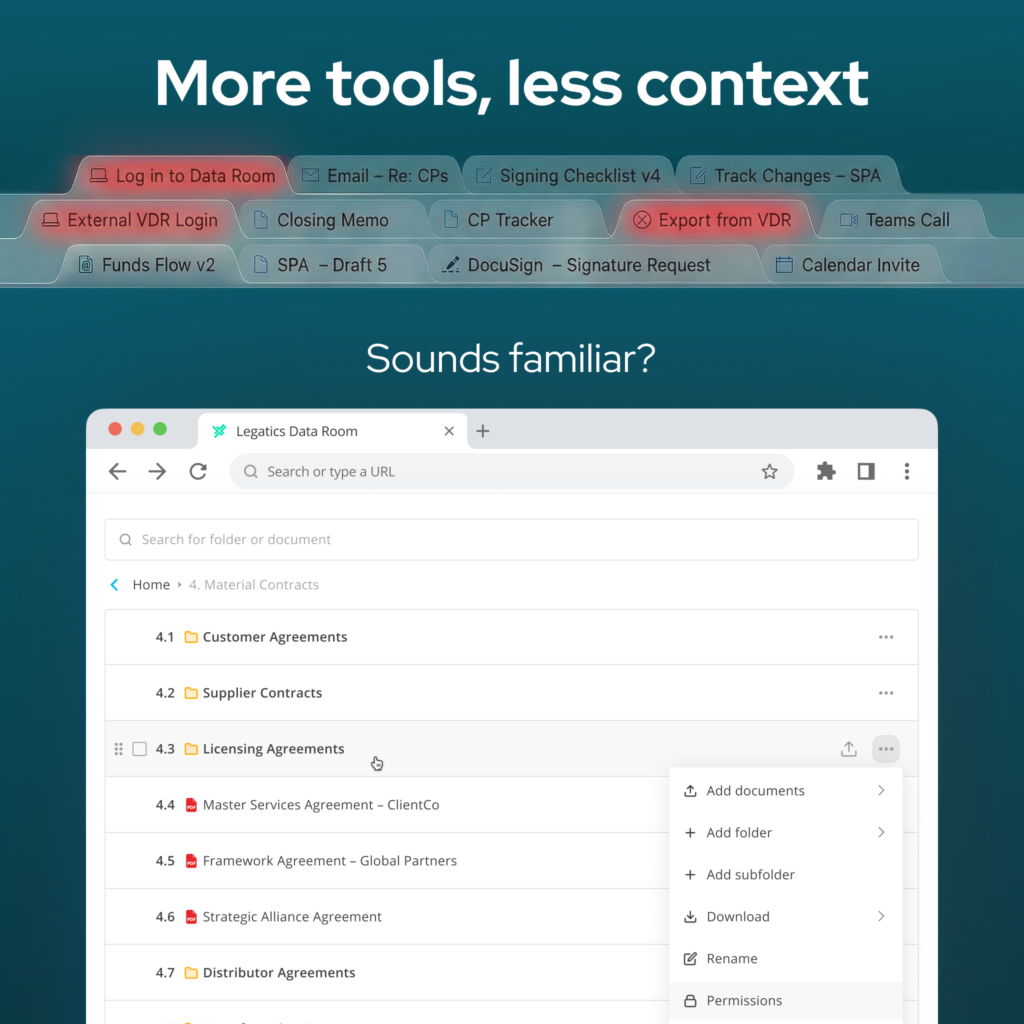

Consequently, the potential application of GPT-3 to the legal industry is restricted to tasks that require prediction; it is not well-suited to applications that require the model to learn from user interactions, to reason logically or to engage in goal-directed behavior. GPT-3 would thus struggle if it were required to negotiate with clients (or track negotiations), determine whether a contract contradicts a jurisdiction’s laws or a client’s risk-management policies, or optimize a firm’s strategy given legal, regulatory or financial constraints. Moreover, GPT-3 has important technical limitations in production: it is too large to run economically except at scale; inference is slow (again because of its size); and the reasons for its predictions are opaque. At present, GPT-3 is only available to OpenAI’s customers through an API, which raises a privacy issue: data would need to be sent to a third party’s server in California. As GPT-3 appears to memorize (parts of) its training data, further confidentiality issues arise if it is trained on client data. Finally, unless the model is fine-tuned on proprietary data, any business built on GPT-3’s text prediction has no competitive advantage once its initial prompt is reverse engineered.

A Path to Artificial General Intelligence

Despite these limitations, GPT-3 represents an important step on the path towards artificial general intelligence – or, at least, artificial expert intelligence in text-heavy domains such as the legal industry. Its contribution is to demonstrate that, with enough parameters, computational power and training data, it is possible to perform extremely well on tasks that can be expressed as text prediction problems. GPT-3’s strong accuracy on many of these tasks and the emergence of high-level properties in its responses (e.g. syntactic correctness, even on programming tasks; an impressive command of linguistic register; and the ability to imitate artistic style) are particularly notable, because it has not been explicitly programmed to do these things, nor has it been encoded with prior beliefs. This is in contrast to biological intelligence, which has instincts and so develops from a highly prototypical initial state: prior to training, GPT-3’s parameters are randomly initialised, so its linguistic capabilities are (in effect) developed de novo from a blank slate on which experience writes. While this method of skill acquisition and cognitive development is extremely inefficient (and, within the lifespans of humans and animals, impractical), the ‘bitter lesson’ of artificial intelligence research is that general, computationally heavy methods outperform systems designed with domain expertise. Criticisms that machine learning models such as GPT-3 could never lead to artificial general intelligence because they do not truly understand the concepts they are discussing, or have no conception of causality, are therefore unconvincing: if a model makes perfect predictions and generalises correctly, is this not the same as understanding?

The more practical problems are that the training data is not unlimited; that the attention mechanism that forms part of GPT-3’s architecture is defined only over its input, not its past interactions; and that GPT-3 is trained only to imitate text. It does not interact with, or receive feedback from, the environments in which it might be deployed (e.g. simulations of legal tasks), nor are its predictions assessed on their truth or desirability. (The internet is a cesspit of false information and hurtful language, and this has polluted GPT-3’s output; until there is a provably effective method of overcoming inaccurate algorithmic biases, these models should not be used in production in domains where such biases will have negative consequences. It is shocking, for example, that opaque machine learning algorithms are used to set bail.)
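For readers unfamiliar with attention, the following is a minimal single-head version of the scaled dot-product attention used in transformer models such as GPT-3, written in plain Python. It is a simplification: GPT-3 stacks many layers of multi-head attention with learned query, key and value projections, whereas here the input vectors are used directly as queries, keys and values. What it does illustrate faithfully is the point about inputs: each position can attend only to itself and earlier positions in the current input (the causal mask), and no state persists between calls.

```python
import math
from typing import List

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(q: List[Vector], k: List[Vector], v: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product attention with a causal mask.

    Position i may attend only to positions 0..i of the current input.
    Nothing is carried over between calls, so text outside the prompt
    (such as an earlier conversation) can never be attended to.
    """
    d = len(q[0])
    out = []
    for i, qi in enumerate(q):
        # Scaled dot products with this position and earlier ones only.
        scores = [sum(a * b for a, b in zip(qi, k[j])) / math.sqrt(d) for j in range(i + 1)]
        weights = softmax(scores)
        # Output is the attention-weighted average of the visible value vectors.
        out.append([sum(w * v[j][c] for j, w in enumerate(weights)) for c in range(len(v[0]))])
    return out


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = causal_self_attention(x, x, x)
print(out[0])  # prints [1.0, 0.0]: the first position can only see itself
```

Changing the last input vector leaves the outputs for earlier positions untouched, and a second call knows nothing about the first: the mechanism ranges over the prompt and nothing else.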

The human brain has at least 100tr synapses (compared with GPT-3’s 125m–175b parameters), and each synapse is probably more powerful than a single parameter – so, for now, we are well ahead of the machines. However, the cost of computation is decreasing exponentially, and artificial intelligence research is proceeding at breakneck speed. Given this, it is inevitable that artificial intelligence will transform legal transactions.
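Taking the lower estimate of 100tr synapses and (generously, given the point above) equating one synapse with one parameter, the gap in raw scale against the largest and smallest GPT-3 models is:

$$
\frac{10^{14}\ \text{synapses}}{1.75 \times 10^{11}\ \text{parameters}} \approx 571,
\qquad
\frac{10^{14}\ \text{synapses}}{1.25 \times 10^{8}\ \text{parameters}} = 8 \times 10^{5}
$$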

With thanks to Iliyana Futkova, Peter Harrison, Jack Hopkins, Ted Truscott and Fin Vermehr for productive discussions on this topic.